Hummina Shadeeba said:

“it requires 2.47 watts of power to raise the temperature of one gallon of water one degree in one hour.”

Why is time in the equation?

Because it takes time to do work. Nothing happens instantaneously. The faster you want something to happen, the more power (over the shorter time you have) it takes to do that. Technically for the above equation, it would be 2.47Wh, not 2.47W, because you would be applying the 2.47W continuously for the entire hour.

Here's some basic math that may show you something of what you need. It could be wrong (me and numbers do not always get along). I'm also assuming the degrees in the original formula are F, not C: none of the places I saw it said one way or the other, but they were using non-metric numbers for most things.

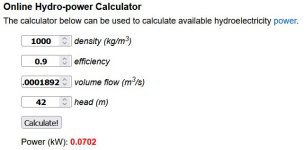

If you want to raise the temperature of one gallon of water from, say, 50F (because the pipes usually run in your cold attic or slab, keeping the water cold in this season on this end of the world; it could be even colder) to 120F, that's a 70F difference. So it would take 70 x 2.47W to change that temperature in an hour, assuming perfect insulation on the water containment with the element in it, so that there is zero heat loss. (If you include realistic heat loss, it gets a lot more complicated.) That's 172.9W, applied continuously for the entire hour, or 172.9Wh.
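Here's that arithmetic as a small Python sketch, in case it helps to plug in your own numbers. The function name and constant are just made up for illustration, and it carries the same assumptions as above: the 2.47 figure is per degree F, and there is zero heat loss.

```python
# Figure quoted in the thread; assumed here to be Wh per gallon per degree F.
WH_PER_GALLON_PER_DEG_F = 2.47

def heating_energy_wh(gallons, start_f, end_f):
    """Energy in watt-hours to heat the water, ignoring all heat loss."""
    return gallons * (end_f - start_f) * WH_PER_GALLON_PER_DEG_F

# One gallon from 50F to 120F, a 70F rise.
energy = heating_energy_wh(1, 50, 120)
print(f"{energy:.1f} Wh")  # 172.9 Wh
```

Change the gallons or the two temperatures to match your own setup.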

If you need to raise it to that temperature in a tenth of a second (which is probably more time than the water will actually be inside the heating segment of a small point-of-use heater, so it would realistically take even more power), then you need to apply the power all in that tenth of a second, rather than over an hour. One hour is 60 minutes. 60 minutes is 3600 seconds. 3600 seconds is 36,000 tenths of a second. So it would take 36,000x the amount of power in that tenth of a second to heat the water, vs doing it in an hour...that's 6,224,400W.

About 6.2MW. It's still taking the same amount of energy, 172.9Wh, but applied in a much, much shorter time, so it takes a higher power to do it.
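To check the time scaling yourself, here is a rough Python sketch of power = energy / time. The names are made up for illustration. It takes one hour as 60 x 60 = 3600 seconds, which is 36,000 tenths of a second, and it still assumes no losses and perfect heat transfer.

```python
SECONDS_PER_HOUR = 3600  # 60 minutes x 60 seconds

def required_power_w(energy_wh, seconds):
    """Average power in watts needed to deliver energy_wh within `seconds`."""
    hours = seconds / SECONDS_PER_HOUR
    return energy_wh / hours

energy_wh = 172.9  # one gallon raised by 70F, from the figures above
for label, seconds in [("one hour", 3600),
                       ("one minute", 60),
                       ("a tenth of a second", 0.1)]:
    print(f"{label}: {required_power_w(energy_wh, seconds):,.0f} W")
```

The energy is the same in every case. Only the time changes, and for a tenth of a second it works out to roughly 6.2 million watts.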

If you have a 10x longer heating element, for the water to contact for longer, it would take 1/10 the power. 100x longer, 1/100 the power...because there is more time for the energy to transfer from element to water before the water is pushed past the element and replaced with colder water (which is a continuous process in a point-of-use heater).

The above also assumes that you get perfect heat transfer from the heater to the water, so that the water absorbs all the energy coming out of the element, which probably won't happen either, so it would take even higher power.

The smaller the temperature difference required between incoming and outgoing water, the less energy it takes to do that work. If you only needed to change it from "room temperature" (around 72F) to 100F, that's only a 28F difference, so it takes less than half the amount of energy.
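A quick side-by-side of the two temperature rises, as a Python sketch with made-up names and the same assumptions as before (2.47Wh per gallon per degree F, no losses):

```python
WH_PER_GALLON_PER_DEG_F = 2.47  # thread's figure, assumed per degree F

def energy_wh(delta_f, gallons=1.0):
    """Watt-hours to raise `gallons` of water by `delta_f` degrees F."""
    return gallons * delta_f * WH_PER_GALLON_PER_DEG_F

cold_start = energy_wh(120 - 50)  # 70F rise
room_start = energy_wh(100 - 72)  # 28F rise
print(f"50F -> 120F: {cold_start:.2f} Wh")
print(f"72F -> 100F: {room_start:.2f} Wh "
      f"({room_start / cold_start:.0%} of the above)")
```

Since the energy scales directly with the temperature difference, the ratio is just 28/70.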

I also ran across a more complete set of numbers:

The specific heat of water is 4190 J/(kg*°C). It means that it takes 4190 joules to heat 1 kg of water by 1°C.

You can then convert the units as needed, and find or make formulas to determine actual power input required to do the amount of heating you want to do.
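As one example of that conversion, here is a Python sketch that turns the specific heat into watt-hours per gallon per degree F. It assumes a US gallon, and it assumes water weighs about 1 kg per liter, which is close but not exact. The names are made up for illustration.

```python
SPECIFIC_HEAT_J_PER_KG_C = 4190.0   # value quoted above
LITERS_PER_US_GALLON = 3.785411784  # assumes a US gallon (exact by definition)
KG_PER_LITER = 1.0                  # assumption: water is roughly 1 kg per liter
JOULES_PER_WH = 3600.0              # 1 W for 3600 s
DEG_C_PER_DEG_F = 5.0 / 9.0         # size of one F degree in C degrees

def wh_per_gallon_per_deg_f():
    """Watt-hours to raise one US gallon of water by one degree F."""
    mass_kg = LITERS_PER_US_GALLON * KG_PER_LITER
    joules = SPECIFIC_HEAT_J_PER_KG_C * mass_kg * DEG_C_PER_DEG_F
    return joules / JOULES_PER_WH

print(f"{wh_per_gallon_per_deg_f():.2f} Wh per gallon per degree F")  # 2.45
```

That comes out close to the 2.47 figure from the original quote, which fits with the quote meaning degrees F rather than C.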

![61VRMj4YlJL._SL1125_[1].jpg 61VRMj4YlJL._SL1125_[1].jpg](https://endless-sphere.com/sphere/data/attachments/190/190082-c74e370a97e707a6cbccc80e62383779.jpg)