thunderstorm80

1 kW

- Joined

- Mar 29, 2016

- Messages

- 383

Hi,

Now that I've started working with mortal Li-ion batteries (non-A123s; my 7-year-old A123 pack has lost only 20% of its capacity, but sadly genuine ones are no longer available), I have learned a lot about them and about how you can greatly increase their cycle life (beyond the well-known rule of topping up to less than 4.2 V per cell).

One of the methods Tesla implements to increase the cycle life of their cells is a declining charging C-rate during the bulk stage, so that by the time the battery is close to full (still in the bulk phase) the C-rate is quite small.

I was reading that the Li ions actually compete with each other, and the closer you get to either extreme (fully charged or fully discharged), the harsher that "competition" on the respective electrode becomes. This shows up as an increase in the IR as you push the chemistry in that direction, so if you discharge a cell at constant current, you can feel it getting warmer towards the very end (and the bigger voltage drop shows it, too).
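To put rough numbers on the heating argument, here is a minimal sketch using plain resistive heating, P = I² × R. The IR values and the 20 A current are made-up figures for illustration, not measurements of any real cell, and the function names are my own.

```python
import math


def heat_power_w(current_a: float, ir_ohm: float) -> float:
    """Resistive heating inside the cell, P = I^2 * R, in watts."""
    return current_a ** 2 * ir_ohm


def current_for_same_heat_a(heat_w: float, ir_ohm: float) -> float:
    """Current that produces heat_w at the given IR, I = sqrt(P / R)."""
    return math.sqrt(heat_w / ir_ohm)


# Hypothetical numbers for illustration only, not measurements.
mid_soc_ir_ohm = 0.050     # assumed IR around mid SOC
near_empty_ir_ohm = 0.080  # assumed (higher) IR near empty
current_a = 20.0           # assumed constant discharge current

heat_mid_w = heat_power_w(current_a, mid_soc_ir_ohm)
heat_empty_w = heat_power_w(current_a, near_empty_ir_ohm)

# Current you would have to drop to near empty to keep the heat unchanged.
reduced_current_a = current_for_same_heat_a(heat_mid_w, near_empty_ir_ohm)

print(heat_mid_w, heat_empty_w, reduced_current_a)
```

With the same current, the heat scales directly with IR, so keeping the heat constant means cutting the current by the square root of the IR ratio.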

My theory is that with a programmed policy that varies the discharge/regen current limit with the present %SOC (State of Charge), you can avoid those issues and deal with the greatest enemy: heat.

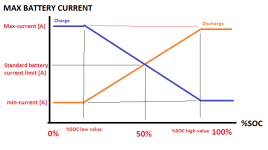

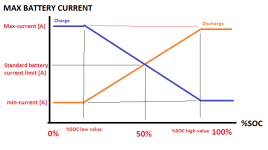

Here is a graph which explains it. Sorry for the simple Windows-Paint job:

For example, at the very beginning of charging an empty battery, the IR (Internal Resistance) for charging should be lower than the spec'd IR, so even a huge surge of continuous regen current wouldn't harm it. (This is what Tesla does: I was reading that the first half of the "tank" fills up quite quickly, while once you reach 80% the charge takes a very long time to get to 100%.)

By the same token, you wouldn't want to keep the same battery current limit when discharging a battery that is almost empty.

Note that before you cross 50% SOC from either direction, you can actually draw/charge more than the "stock" battery current limit recommended by the manufacturer. I believe the spec'd values (including IR) are for 50% SOC, where the chemistry would behave symmetrically in either direction.

Min-Current and Max-Current can be programmed (for example, Min-Current can be set to 0 A if someone wants to fully optimize cycle life at the cost of somewhat less usable energy), as can the low and high %SOC values at which the limit switches between the variable, linear current limit and the constant one.

A device like the Cycle Analyst, which knows the %SOC, could reduce the current limit linearly to zero (or to a defined low current limit) when approaching either end of the SOC range. The user can program its maximum battery current for when you start discharging a battery at 100% SOC or regen'ing into one at 0% SOC. At the edges it can be set to either zero current or a defined current (which would be much lower than the "standard" battery current).
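To make the policy concrete, here is a minimal sketch of how I picture it. This is not Cycle Analyst firmware or any real API: the class, the field names and the example numbers are all made up. I am also assuming the limit stays constant at Max-Current until the programmed SOC threshold and only then ramps linearly down to Min-Current at the edge.

```python
from dataclasses import dataclass


@dataclass
class TaperPolicy:
    """Hypothetical settings; none of these names come from a real device."""
    max_current_a: float  # limit in the constant region
    min_current_a: float  # limit at the very edge (0 A is allowed)
    soc_low_pct: float    # discharge taper starts below this SOC
    soc_high_pct: float   # regen taper starts above this SOC


def _lerp(x, x0, x1, y0, y1):
    """Linear interpolation between the points (x0, y0) and (x1, y1)."""
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def _clamp_soc(soc_pct):
    return min(max(soc_pct, 0.0), 100.0)


def discharge_limit_a(policy, soc_pct):
    """Discharge limit: constant, then linear taper towards 0% SOC."""
    soc = _clamp_soc(soc_pct)
    if soc >= policy.soc_low_pct:
        return policy.max_current_a
    return _lerp(soc, 0.0, policy.soc_low_pct,
                 policy.min_current_a, policy.max_current_a)


def regen_limit_a(policy, soc_pct):
    """Regen limit: constant, then linear taper towards 100% SOC."""
    soc = _clamp_soc(soc_pct)
    if soc <= policy.soc_high_pct:
        return policy.max_current_a
    return _lerp(soc, policy.soc_high_pct, 100.0,
                 policy.max_current_a, policy.min_current_a)


# Example settings (illustrative numbers only).
policy = TaperPolicy(max_current_a=30.0, min_current_a=0.0,
                     soc_low_pct=20.0, soc_high_pct=80.0)

for soc in (0, 10, 20, 50, 80, 90, 100):
    print(soc, discharge_limit_a(policy, soc), regen_limit_a(policy, soc))
```

Setting `min_current_a` above zero gives the "defined low current" variant, and separate Max-Current values for discharge and regen would be an easy extension.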

What do you think?

I find myself already doing this manually by not charging my new non-A123 battery above 70%, so I can still put short peaks of 1 kW of regen into it without fearing rapid degradation.

(Of course, I can charge it to 80% or even 100% if I need the range.)

Perhaps the graph should follow an exponential decay rather than a straight line. I don't know for sure. I do believe that such an approach, even as empirical thinking, is correct.
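For comparison, this is roughly what an exponential-shaped taper could look like. It is only one possible curve: the normalisation, the `steepness` parameter and its default of 3.0 are my own assumptions, and I have no data showing that it matches the chemistry better than the straight line.

```python
import math


def exp_taper_limit_a(distance_pct, taper_width_pct,
                      max_current_a, min_current_a, steepness=3.0):
    """Current limit as a function of distance from the SOC edge.

    distance_pct is how far the SOC is from the edge being approached
    (0 = at the edge). Beyond taper_width_pct the limit is constant.
    Inside the taper the gap to max_current_a decays exponentially with
    distance, normalised so the curve hits min_current_a exactly at the
    edge and max_current_a exactly at the start of the taper.
    """
    if distance_pct >= taper_width_pct:
        return max_current_a
    d = max(distance_pct, 0.0) / taper_width_pct  # 0 at edge, 1 at taper start
    shape = (1.0 - math.exp(-steepness * d)) / (1.0 - math.exp(-steepness))
    return min_current_a + (max_current_a - min_current_a) * shape


# Discharging with a 20%-wide taper: the distance is simply the SOC itself.
# For regen the distance would be (100 - SOC).
for soc in (0, 5, 10, 15, 20, 50):
    print(soc, round(exp_taper_limit_a(soc, 20.0, 30.0, 0.0), 2))
```

Compared with the linear ramp, this shape keeps the limit closer to Max-Current through most of the taper and then drops off sharply right at the edge. A larger `steepness` makes that drop later and sharper.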
