Hey guys, so I've been meaning for a long, long time now to run a set of controlled lab experiments measuring the temperature rise of hub motors subjected to different load conditions. The main objective is to develop a more comprehensive 2nd-order thermal model to incorporate into the ebikes.ca simulator program for predicting the overheating and failure times of a motor. However, it will also be a great opportunity to decisively compare different cooling strategies (vent holes, active fan blades, oil-filled cooling, etc.) in order to quantify and model their effects too. There's been a lot of neat pioneering work by people on this forum (not to mention a lot of lively debate), but not quite as much empirical science.

As background, the current "overheat in" time on the simulator comes from data gathered when heating up a static, non-rotating hub motor by putting a constant current into the phase winding and recording the phase voltage over time. Because copper has a well-characterized temperature coefficient of resistance, the terminal voltage rises with temperature when the winding is fed from a constant current supply, and so the actual copper winding temperature was easily deduced without any thermometer devices.
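For anyone who wants to see the arithmetic, here is a minimal sketch of how the winding temperature falls out of the voltage reading. It assumes the usual linear model for copper, R(T) = R_ref * (1 + alpha * (T - T_ref)), with the commonly quoted alpha of about 0.00393 per °C referenced to 20 °C. The function name and the example numbers are made up for illustration and are not from the original test.

```python
ALPHA_CU_20C = 0.00393  # copper temperature coefficient per deg C, referenced to 20 C (textbook value)

def winding_temp_c(v_now, v_ref, t_ref_c=20.0):
    """Estimate copper temperature from phase voltage at constant current.

    v_ref is the voltage measured at the known starting temperature t_ref_c.
    At constant current, V is proportional to R, so V/V_ref equals R/R_ref.
    """
    # Re-reference alpha to the starting temperature if it was not 20 C.
    alpha_ref = ALPHA_CU_20C / (1.0 + ALPHA_CU_20C * (t_ref_c - 20.0))
    return t_ref_c + (v_now / v_ref - 1.0) / alpha_ref

# Hypothetical reading: voltage has risen 20% from a 25 C start.
estimate_c = winding_temp_c(v_now=1.20, v_ref=1.00, t_ref_c=25.0)
```

This reads the average temperature of the whole winding, so a local hot spot would be hotter than the number it returns.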

The process and some of the data is shown in the post here:

http://endless-sphere.com/forums/viewtopic.php?p=218321#p218321

When the experiment is done at one current, the resulting temperature rise of the motor core models accurately enough as a single lumped heat capacity with a heat conductivity to ambient. By doing a linear regression, we could get best-fit coefficients for the equivalent heat capacity and conductivity that result in the smallest error to the actual data:

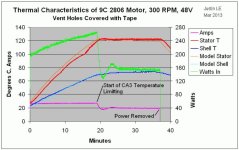
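To make the single-lump idea concrete, here is a rough sketch of one way such a fit could be done. It is not necessarily the regression used for the plot above. It assumes the model C * dT/dt = P - G * (T - T_amb), where C is the heat capacity in J/°C and G is the heat conductivity to ambient in W/°C, and it treats the heating power P as constant, which is a simplification since I²R creeps up as the copper warms. All names are hypothetical.

```python
def fit_first_order(times_s, temps_c, power_w, ambient_c):
    """Least-squares fit of C*dT/dt = P - G*(T - T_amb). Returns (C, G)."""
    sxx = sxy = syy = sxz = syz = 0.0
    for i in range(len(times_s) - 1):
        dt = times_s[i + 1] - times_s[i]
        # Slope over the interval, paired with the mid-interval temperature rise.
        slope = (temps_c[i + 1] - temps_c[i]) / dt
        rise = 0.5 * (temps_c[i + 1] + temps_c[i]) - ambient_c
        # Linear model: slope = (1/C) * x + (G/C) * y
        x = power_w
        y = -rise
        sxx += x * x
        sxy += x * y
        syy += y * y
        sxz += x * slope
        syz += y * slope
    # Solve the 2x2 normal equations for 1/C and G/C.
    det = sxx * syy - sxy * sxy
    inv_c = (sxz * syy - syz * sxy) / det
    g_over_c = (syz * sxx - sxz * sxy) / det
    heat_capacity = 1.0 / inv_c
    return heat_capacity, g_over_c * heat_capacity
```

Differencing real measurements amplifies noise, so in practice the temperature trace would probably need smoothing first, or the exponential could be fitted directly instead.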

However, apart from the fact that the motor isn't spinning (and rotation ought to greatly help with the convective cooling), this first-order model also suffers from the fact that the copper windings, stator, and side cover are all lumped together and assumed to be at a uniform temperature. But we know that there is quite a barrier between the stator and the side plates, and their temperatures are anything but equal.

So in a more realistic situation, we would split the motor into two thermal masses. There is the stator core with the copper windings where the heat is generated, surrounded by the motor shell with its own heat capacity. There would be one coefficient for heat transfer from the stator to the shell, and another coefficient for the transfer of energy from the shell to ambient:
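Written out as a quick simulation, the two-mass picture might look like the sketch below. It assumes the simplest possible form, with heat flowing only stator to shell to ambient, and steps the two energy balances forward with plain Euler integration. The parameter names and every number in the example call are placeholders, not measured motor values.

```python
def simulate_two_mass(power_w, c_stator, c_shell, g_stator_shell, g_shell_amb,
                      ambient_c=20.0, duration_s=3600.0, dt_s=1.0):
    """Forward-Euler integration of a two-lump thermal model.

        C_stator * dTs/dt = P - G_ss * (Ts - Th)
        C_shell  * dTh/dt = G_ss * (Ts - Th) - G_sa * (Th - T_amb)

    Returns a list of (time_s, stator_temp_c, shell_temp_c) tuples.
    """
    t_stator = t_shell = ambient_c  # both masses start at ambient
    history = [(0.0, t_stator, t_shell)]
    steps = int(round(duration_s / dt_s))
    for n in range(1, steps + 1):
        q_inner = g_stator_shell * (t_stator - t_shell)  # W, stator to shell
        q_outer = g_shell_amb * (t_shell - ambient_c)    # W, shell to ambient
        t_stator += dt_s * (power_w - q_inner) / c_stator
        t_shell += dt_s * (q_inner - q_outer) / c_shell
        history.append((n * dt_s, t_stator, t_shell))
    return history

# Placeholder values only: 200 W of copper loss for one hour.
trace = simulate_two_mass(power_w=200.0, c_stator=2000.0, c_shell=1500.0,
                          g_stator_shell=4.0, g_shell_amb=8.0)
```

In steady state this form settles at a shell temperature of T_amb + P / G_sa and a stator temperature a further P / G_ss above that, which makes a handy sanity check on any fitted coefficients.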
